Kafka Study Notes 1: Installing Kafka with Docker

Note:

This article was last updated on May 11, 2023. If any images in the article fail to load, please leave a comment or contact me directly.

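Kafka

Official documentation (Quickstart): https://kafka.apache.org/33/documentation.html#quickstart

Kafka is an open-source stream-processing platform developed by the Apache Software Foundation and written in Scala and Java. It is a high-throughput, distributed publish-subscribe messaging system that can handle all of the activity-stream data users generate on a website.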

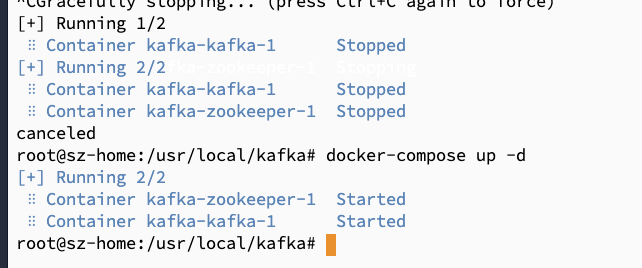

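Installing with Docker

```shell
curl -sSL https://raw.githubusercontent.com/bitnami/containers/main/bitnami/kafka/docker-compose.yml > docker-compose.yml
docker-compose up -d
```

Remember to open port 9092 in your firewall (and port 9093 as well if you set up the external listener described below).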

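External network access

By default, the docker-compose file only allows access from the internal network. To access Kafka from the public internet, add the following lines to the kafka service's environment section in docker-compose: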

```diff
 environment:
   - KAFKA_CFG_ZOOKEEPER_CONNECT=zookeeper:2181
   - ALLOW_PLAINTEXT_LISTENER=yes
+  - KAFKA_CFG_LISTENER_SECURITY_PROTOCOL_MAP=CLIENT:PLAINTEXT,EXTERNAL:PLAINTEXT
+  - KAFKA_CFG_LISTENERS=CLIENT://:9092,EXTERNAL://:9093
+  - KAFKA_CFG_ADVERTISED_LISTENERS=CLIENT://kafka:9092,EXTERNAL://{{your-public-ip}}:9093
+  - KAFKA_CFG_INTER_BROKER_LISTENER_NAME=CLIENT
```

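Then change KAFKA_CFG_LISTENERS above to CLIENT://:9092,EXTERNAL://0.0.0.0:9093 so that the EXTERNAL listener binds to all network interfaces inside the container.

Keep KAFKA_CFG_ADVERTISED_LISTENERS as CLIENT://kafka:9092,EXTERNAL://{{your-public-ip}}:9093, replacing the placeholder with your server's public IP. The advertised address is what the broker hands back to clients in its metadata, so external clients must be able to reach it, while containers on the compose network keep using kafka:9092.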
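To make the relationship between the two listeners concrete, the sketch below splits an advertised-listener string into name/address pairs. The helper parseListeners is hypothetical and only illustrates the NAME://host:port format (Kafka parses these settings itself). It shows that a client bootstrapping through port 9093 is handed the EXTERNAL address, so that address must be reachable from outside, while other containers use the CLIENT address.

```go
package main

import (
	"fmt"
	"strings"
)

// parseListeners (hypothetical helper) turns a listener list such as
// "CLIENT://kafka:9092,EXTERNAL://1.2.3.4:9093" into a map from listener
// name to "host:port".
func parseListeners(s string) (map[string]string, error) {
	out := make(map[string]string)
	for _, item := range strings.Split(s, ",") {
		name, addr, ok := strings.Cut(strings.TrimSpace(item), "://")
		if !ok || name == "" {
			return nil, fmt.Errorf("malformed listener %q", item)
		}
		out[name] = addr
	}
	return out, nil
}

func main() {
	// Example value using the sample public IP from this article.
	advertised := "CLIENT://kafka:9092,EXTERNAL://119.122.111.123:9093"
	m, err := parseListeners(advertised)
	if err != nil {
		fmt.Println(err)
		return
	}
	// Clients that connect on the EXTERNAL listener receive this address in metadata.
	fmt.Println("external clients are told to connect to:", m["EXTERNAL"])
	// Containers on the compose network use the CLIENT listener.
	fmt.Println("containers on the compose network use:", m["CLIENT"])
}
```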

The final compose file (119.122.111.123 is an example public IP; replace it with your own):

```yaml
version: "2"

services:
  zookeeper:
    image: docker.io/bitnami/zookeeper:3.8
    ports:
      - "2181:2181"
    volumes:
      - "zookeeper_data:/bitnami"
    environment:
      - ALLOW_ANONYMOUS_LOGIN=yes
  kafka:
    image: docker.io/bitnami/kafka:3.4
    ports:
      - "9092:9092"
      - "9093:9093"
    volumes:
      - "kafka_data:/bitnami"
    environment:
      - KAFKA_CFG_ZOOKEEPER_CONNECT=zookeeper:2181
      - ALLOW_PLAINTEXT_LISTENER=yes
      - KAFKA_CFG_LISTENER_SECURITY_PROTOCOL_MAP=CLIENT:PLAINTEXT,EXTERNAL:PLAINTEXT
      - KAFKA_CFG_LISTENERS=CLIENT://:9092,EXTERNAL://0.0.0.0:9093
      - KAFKA_CFG_ADVERTISED_LISTENERS=CLIENT://kafka:9092,EXTERNAL://119.122.111.123:9093  # replace with your public IP
      - KAFKA_CFG_INTER_BROKER_LISTENER_NAME=CLIENT
    depends_on:
      - zookeeper

volumes:
  zookeeper_data:
    driver: local
  kafka_data:
    driver: local
```

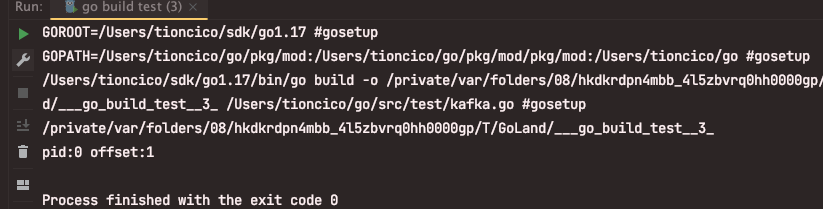

Producer test (pushing data)

```go
package main

import (
	"fmt"

	"github.com/Shopify/sarama"
)

func main() {
	// Kafka producer configuration
	config := sarama.NewConfig()
	config.Producer.RequiredAcks = sarama.WaitForAll          // wait until the leader and all in-sync followers acknowledge the write
	config.Producer.Partitioner = sarama.NewRandomPartitioner // send each message to a randomly chosen partition
	config.Producer.Return.Successes = true                   // report delivered messages on the Successes channel (required by SyncProducer)

	// Connect to Kafka through the external listener (port 9093)
	client, err := sarama.NewSyncProducer([]string{"admin.ddd.cn:9093"}, config)
	if err != nil {
		fmt.Println("failed to create producer, err:", err)
		return
	}
	defer client.Close()

	// Build a message
	msg := &sarama.ProducerMessage{}
	msg.Topic = "web_log"
	msg.Value = sarama.StringEncoder("this is a test log")

	// Send the message; SendMessage returns the partition and offset it was written to
	partition, offset, err := client.SendMessage(msg)
	if err != nil {
		fmt.Println("send msg failed, err:", err)
		return
	}
	fmt.Printf("partition:%v offset:%v\n", partition, offset)
}
```
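The producer above uses sarama.NewRandomPartitioner, so consecutive messages may land on different partitions, and Kafka only guarantees ordering within a single partition. If per-key ordering matters, sarama also provides NewHashPartitioner (its default), which chooses the partition from msg.Key. The standard-library sketch below contrasts the two ideas. The helpers randomPartition and hashPartition are hypothetical; hashPartition uses FNV-1a and is an illustration of key hashing, not a guaranteed byte-for-byte copy of sarama's implementation.

```go
package main

import (
	"fmt"
	"hash/fnv"
	"math/rand"
)

// randomPartition mirrors the idea behind a random partitioner: every message
// goes to a uniformly random partition, so equal keys may be split up.
func randomPartition(numPartitions int32) int32 {
	return rand.Int31n(numPartitions)
}

// hashPartition sketches key-based partitioning: hash the key with FNV-1a,
// clear the sign bit, and take the result modulo the partition count, so
// equal keys always map to the same partition.
func hashPartition(key []byte, numPartitions int32) int32 {
	h := fnv.New32a()
	h.Write(key)
	return int32(h.Sum32()&0x7fffffff) % numPartitions
}

func main() {
	const partitions = 3
	for i := 0; i < 3; i++ {
		// The random choice may change on every call; the hash choice does not.
		fmt.Println("random:", randomPartition(partitions),
			"hash(user-42):", hashPartition([]byte("user-42"), partitions))
	}
}
```

In the producer itself, switching would mean setting config.Producer.Partitioner = sarama.NewHashPartitioner and giving each message a key, for example msg.Key = sarama.StringEncoder("user-42").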

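Consumer test (pulling data)

```go
package main

import (
	"fmt"
	"sync"

	"github.com/Shopify/sarama"
)

func consumer() {
	// Connect through the external listener; a nil config uses sarama's defaults
	consumer, err := sarama.NewConsumer([]string{"admin.321.cn:9093"}, nil)
	if err != nil {
		fmt.Printf("fail to start consumer, err:%v\n", err)
		return
	}
	defer consumer.Close()

	partitionList, err := consumer.Partitions("web_log") // get all partition IDs of the topic
	if err != nil {
		fmt.Printf("fail to get list of partition, err:%v\n", err)
		return
	}
	fmt.Println(partitionList)

	var wg sync.WaitGroup
	for _, partition := range partitionList { // iterate over partition IDs, not slice indices
		// Create a partition consumer for each partition. OffsetNewest only
		// delivers messages produced from now on, so start the consumer first.
		pc, err := consumer.ConsumePartition("web_log", partition, sarama.OffsetNewest)
		if err != nil {
			fmt.Printf("failed to start consumer for partition %d, err:%v\n", partition, err)
			return
		}
		wg.Add(1)
		// Consume each partition asynchronously
		go func(pc sarama.PartitionConsumer) {
			defer wg.Done()
			defer pc.AsyncClose()
			for msg := range pc.Messages() {
				fmt.Printf("Partition:%d Offset:%d Key:%s Value:%s\n", msg.Partition, msg.Offset, string(msg.Key), string(msg.Value))
			}
		}(pc)
	}
	// Block while the goroutines consume; Messages() only closes when its
	// partition consumer is closed, so this test runs until it is killed.
	wg.Wait()
}

func main() {
	consumer()
}
```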
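The consumer starts one goroutine per partition, and each goroutine keeps reading until its partition consumer is closed. The sketch below isolates that fan-in pattern using only the standard library: plain channels stand in for sarama's per-partition Messages() channels, and the message type and mergePartitions helper are hypothetical. It also shows that ordering is preserved within each partition but not across partitions.

```go
package main

import (
	"fmt"
	"sync"
)

// message is a hypothetical stand-in for *sarama.ConsumerMessage.
type message struct {
	Partition int32
	Offset    int64
	Value     string
}

// mergePartitions fans in one channel per partition into a single output
// channel. The output closes only after every input channel has closed.
func mergePartitions(inputs ...<-chan message) <-chan message {
	out := make(chan message)
	var wg sync.WaitGroup
	for _, in := range inputs {
		wg.Add(1)
		go func(in <-chan message) {
			defer wg.Done()
			for m := range in { // one goroutine per partition keeps its order
				out <- m
			}
		}(in)
	}
	go func() {
		wg.Wait()
		close(out)
	}()
	return out
}

func main() {
	// Simulate two partitions with a few buffered messages each.
	p0 := make(chan message, 2)
	p1 := make(chan message, 1)
	p0 <- message{0, 0, "a"}
	p0 <- message{0, 1, "b"}
	p1 <- message{1, 0, "c"}
	close(p0)
	close(p1)
	for m := range mergePartitions(p0, p1) {
		fmt.Printf("Partition:%d Offset:%d Value:%s\n", m.Partition, m.Offset, m.Value)
	}
}
```

Messages from the two partitions may interleave in any order, but offsets within one partition always come out ascending.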


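- Tags: Database, Service Architecture

- Article link: https://www.php20.cn/article/417

- Copyright: This article is an original post by 仙士可. Reposting must follow the Attribution-NonCommercial-ShareAlike 4.0 International (CC BY-NC-SA 4.0) license.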